I build things, break things, and write what I learn.

Currently exploring:

- → LLMs

- → DevOps

- → Systems

LLM Data Preprocessing

Preprocessing text data is one of the most important steps in training Large Language Models (LLMs). The first step in this process is tokenization, the act of converting raw …

LLM Introduction

What is an LLM? LLM stands for Large Language Model. An LLM is a type of artificial intelligence model designed to understand, generate, and interact using human language. It learns patterns, …

Positional & Input Embeddings (Data Preprocessing)

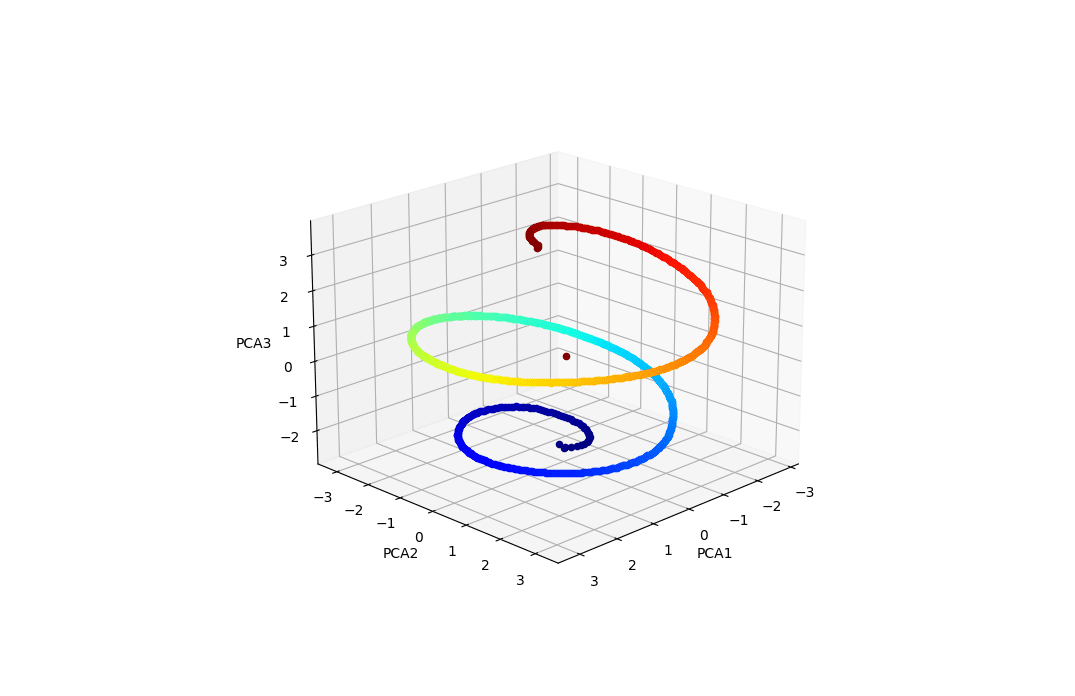

📘 3. Positional Embeddings 🧠 Why Do We Need Positional Embeddings? In the embedding layer, the same token always gets mapped to the same vector representation. That means the model naturally has no idea about …

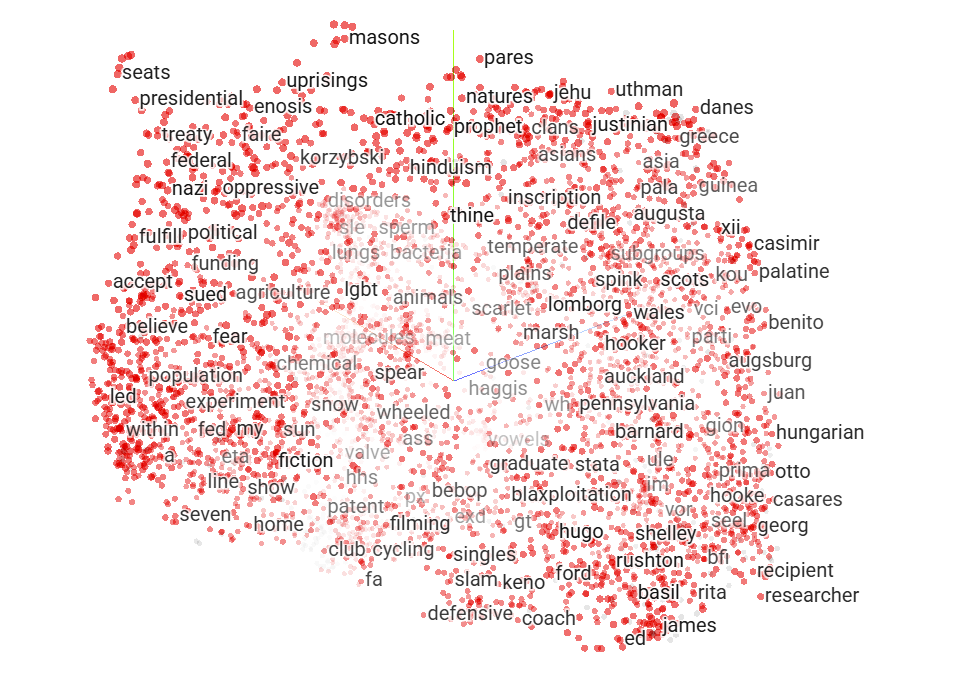

Token Embeddings (Data Preprocessing)

🧠 2. Token Embeddings in LLMs: They’re also often called vector embeddings or word embeddings. 🔤 From Tokens to Token Embeddings: Before a model can “read” anything, it first breaks text into smaller …